Introduction

Idea

We normalize the outputs of a layer so that they have zero mean and unit variance. We've already done this to the input data in linear classification, and it works the same way for the layers inside a neural network.

Why does it work?

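When we draw the contour map of the loss function for unnormalized inputs, the contours are elongated ovals rather than circles: the loss changes much faster along some directions in weight space than along others. A learning rate small enough for the steep direction makes progress along the flat direction very slow, and a larger one easily overshoots along the steep direction.

After we normalize the inputs, the contours become much closer to circles, so a single learning rate works well in every direction and the loss is easier to optimize.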
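A toy sketch of the overshooting argument, assuming a quadratic loss $\frac{1}{2}\sum_i c_i w_i^2$ where the curvature $c_i$ of each direction stands in for the oval (unequal $c_i$) versus circular (equal $c_i$) contour map. The helper `steps_to_converge` is hypothetical and only for illustration.

```python
import numpy as np

def steps_to_converge(curvatures, lr, tol=1e-6, max_steps=10_000):
    """Gradient descent on the toy loss 0.5 * sum(c_i * w_i**2), from w = 1.

    Returns the number of steps until the loss drops below `tol`,
    or None if the iterates blow up (overshooting) or never get there.
    """
    c = np.asarray(curvatures, dtype=float)
    w = np.ones_like(c)
    for step in range(max_steps):
        loss = 0.5 * np.sum(c * w ** 2)
        if loss < tol:
            return step
        if loss > 1e12:                  # diverged: each step overshoots more
            return None
        w = w - lr * c * w               # the gradient of the loss is c * w
    return None

# Oval contours: one direction is 100x steeper than the other, so the
# learning rate must stay below 2 / 100 or that direction overshoots.
print(steps_to_converge([100.0, 1.0], lr=0.019))  # several hundred steps
print(steps_to_converge([100.0, 1.0], lr=0.021))  # None (diverges)
# Circular contours: equal curvature, so a large learning rate is safe.
print(steps_to_converge([1.0, 1.0], lr=0.9))      # a few steps
```

The only difference between the runs is the ratio of curvatures, which is what normalizing the inputs is meant to even out.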

Mathematical Expression
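For reference, the standard batch-normalization computation for a mini-batch of $N$ values $x_1, \dots, x_N$ of one feature (or channel), where $\varepsilon$ is a small constant for numerical stability and $\gamma$, $\beta$ are learnable:

$$
\begin{aligned}
\mu &= \frac{1}{N}\sum_{i=1}^{N} x_i, \qquad
\sigma^2 = \frac{1}{N}\sum_{i=1}^{N} (x_i - \mu)^2, \\
\hat{x}_i &= \frac{x_i - \mu}{\sqrt{\sigma^2 + \varepsilon}}, \qquad
y_i = \gamma\,\hat{x}_i + \beta .
\end{aligned}
$$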

Gamma and Beta

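After normalization, the output goes through an activation function (e.g., ReLU). Forcing every output to exactly zero mean and unit variance can be too hard a constraint for the network, so after normalization we introduce two learnable parameters, a scale $\gamma$ and a shift $\beta$, to adjust the output's distribution: $y = \gamma \hat{x} + \beta$, where $\hat{x}$ is the normalized output. In particular, learning $\gamma = \sqrt{\sigma^2 + \varepsilon}$ and $\beta = \mu$ recovers the original, unnormalized output.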
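A minimal NumPy sketch of the training-time forward pass, assuming the input is a fully connected layer's output of shape $(N, D)$; `batchnorm_forward` is a hypothetical helper name. The second call shows that choosing $\gamma = \sqrt{\sigma^2 + \varepsilon}$ and $\beta = \mu$ makes the layer return its input unchanged, so the learnable scale and shift can undo the normalization if that helps the network.

```python
import numpy as np

def batchnorm_forward(x, gamma, beta, eps=1e-5):
    """Training-mode batch norm for a fully connected layer's output.

    x: array of shape (N, D), one row per example in the mini-batch.
    gamma, beta: learnable arrays of shape (D,).
    """
    mu = x.mean(axis=0)                      # per-feature mean over the batch
    var = x.var(axis=0)                      # per-feature (biased) variance
    x_hat = (x - mu) / np.sqrt(var + eps)    # zero mean, unit variance
    return gamma * x_hat + beta              # learnable scale and shift

rng = np.random.default_rng(0)
x = rng.normal(loc=3.0, scale=2.0, size=(64, 4))

# gamma = 1, beta = 0: plain normalization.
y = batchnorm_forward(x, np.ones(4), np.zeros(4))
print(y.mean(axis=0).round(6), y.std(axis=0).round(3))   # ~0 and ~1

# gamma = sqrt(var + eps), beta = mu: the layer undoes the normalization.
eps = 1e-5
y_id = batchnorm_forward(x, np.sqrt(x.var(axis=0) + eps), x.mean(axis=0), eps)
print(np.allclose(y_id, x))                               # True
```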

Test Process

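At test time we may feed a single example into the network, and normalizing over a batch of one is meaningless (the example is its own mean, so $x - \mu = 0$). The prediction for an example also should not depend on which other examples happen to share its batch. Hence, at test time we use running averages of the mean and variance computed over the mini-batches during training.

With these constants, normalization becomes a fixed affine function of its input:

$$y = \gamma \frac{x - \mu_{\text{run}}}{\sqrt{\sigma^2_{\text{run}} + \varepsilon}} + \beta$$

so at test time it is just a linear operation, which can even be fused into the preceding fully connected or convolutional layer.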
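A sketch of how the running averages might be tracked and used, assuming an exponential moving average with `momentum = 0.9` as the weight on the old value (a common choice; PyTorch's `momentum` argument is defined the other way round, as the weight on the new batch). `SimpleBatchNorm` is a hypothetical class, not a library API. The last two checks show that test-time batch norm is an affine map $a x + b$ and can be folded into a preceding linear layer.

```python
import numpy as np

class SimpleBatchNorm:
    """Hypothetical minimal batch norm with running statistics, inputs (N, D)."""

    def __init__(self, dim, momentum=0.9, eps=1e-5):
        self.gamma = np.ones(dim)
        self.beta = np.zeros(dim)
        self.running_mean = np.zeros(dim)
        self.running_var = np.ones(dim)
        self.momentum = momentum          # weight kept from the old running value
        self.eps = eps

    def __call__(self, x, training):
        if training:
            mu, var = x.mean(axis=0), x.var(axis=0)
            # Exponential moving averages of the mini-batch statistics.
            m = self.momentum
            self.running_mean = m * self.running_mean + (1 - m) * mu
            self.running_var = m * self.running_var + (1 - m) * var
        else:
            mu, var = self.running_mean, self.running_var   # fixed constants
        x_hat = (x - mu) / np.sqrt(var + self.eps)
        return self.gamma * x_hat + self.beta

rng = np.random.default_rng(0)
bn = SimpleBatchNorm(dim=3)
for _ in range(200):                        # "training": feed mini-batches
    bn(rng.normal(5.0, 2.0, size=(32, 3)), training=True)

# At test time a single example is fine: only the stored constants are used.
x_test = rng.normal(5.0, 2.0, size=(1, 3))
y_test = bn(x_test, training=False)

# Test-time batch norm is an affine map y = a * x + b with constant a, b ...
a = bn.gamma / np.sqrt(bn.running_var + bn.eps)
b = bn.beta - a * bn.running_mean
print(np.allclose(y_test, a * x_test + b))                     # True

# ... so it can be folded into a preceding linear layer z = x @ W + c.
W, c = rng.normal(size=(4, 3)), rng.normal(size=3)
x_in = rng.normal(size=(1, 4))
fused = x_in @ (W * a) + (c * a + b)
print(np.allclose(bn(x_in @ W + c, training=False), fused))    # True
```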

Usually we insert normalization right after a fully connected or convolutional layer and before the nonlinearity, i.e., the activation function.

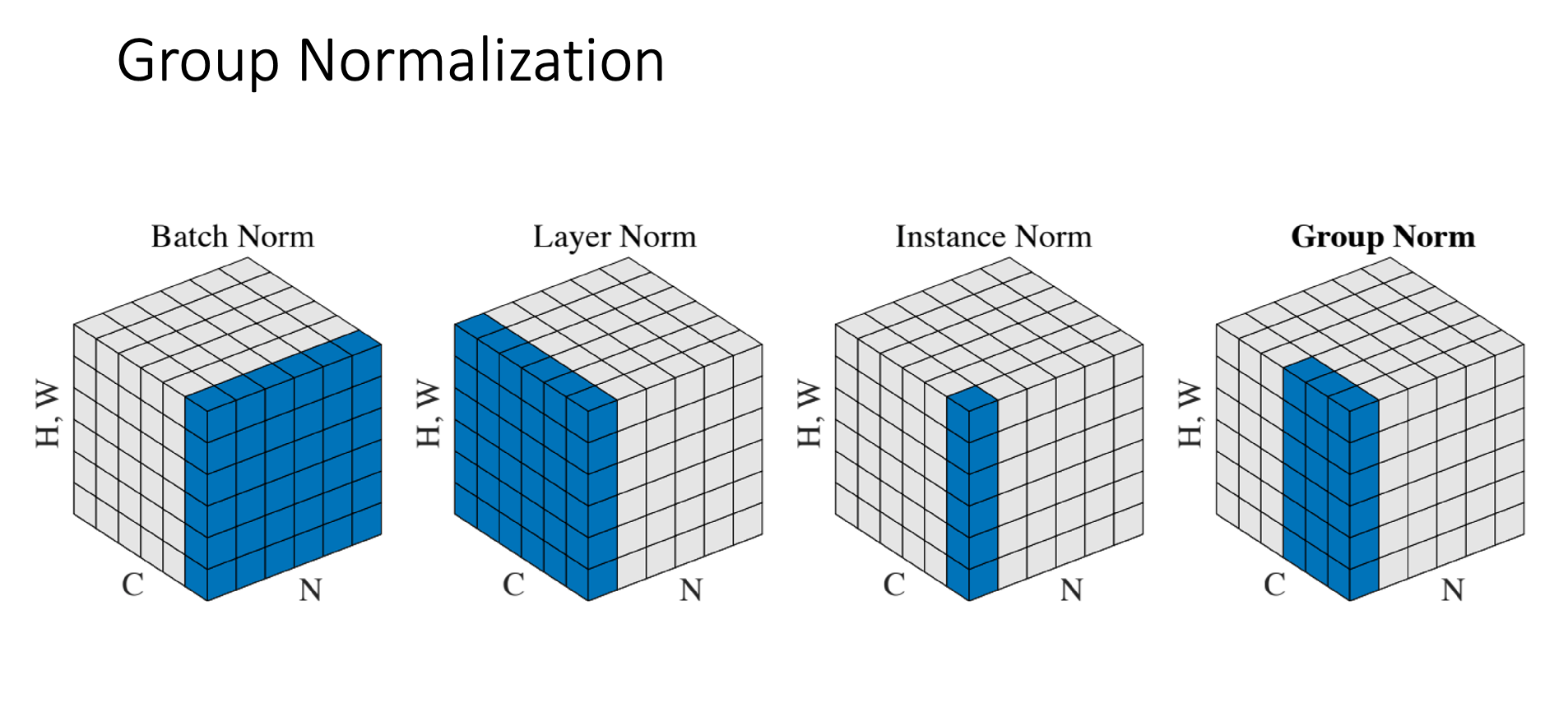
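A NumPy sketch of that ordering (fully connected, then batch norm, then ReLU), using a hypothetical `fc_bn_relu` helper. It also illustrates a side effect: since batch norm subtracts the batch mean right after the fully connected layer, that layer's bias has no effect on the output ($\beta$ takes over its role), which is why it is often dropped.

```python
import numpy as np

def fc_bn_relu(x, W, b, gamma, beta, eps=1e-5):
    """Fully connected -> batch norm (training mode) -> ReLU."""
    z = x @ W + b                                   # 1. fully connected layer
    mu, var = z.mean(axis=0), z.var(axis=0)
    z_hat = (z - mu) / np.sqrt(var + eps)           # 2. normalize pre-activations
    y = gamma * z_hat + beta                        #    learnable scale and shift
    return np.maximum(y, 0.0)                       # 3. nonlinearity comes last

rng = np.random.default_rng(0)
x = rng.normal(size=(16, 8))
W = rng.normal(size=(8, 5))
gamma, beta = np.ones(5), np.zeros(5)

out_no_bias = fc_bn_relu(x, W, np.zeros(5), gamma, beta)
out_bias = fc_bn_relu(x, W, rng.normal(size=5), gamma, beta)
# The batch mean absorbs any constant bias, so the FC bias is redundant here.
print(np.allclose(out_no_bias, out_bias))           # True
```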

Different Kinds of Normalization

Batch Normalization

In batch normalization, we normalize one channel at a time, using all examples in the batch and all spatial positions, i.e., across $N$, $H$, and $W$.

That is, $\mu$, $\sigma$, $\gamma$, and $\beta$ all have the same size $C$, the number of channels (shape $1 \times C \times 1 \times 1$).
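A sketch of batch norm on a convolutional feature map of shape $(N, C, H, W)$, with a hypothetical helper `batchnorm_conv`: the statistics are taken over axes 0, 2 and 3, leaving one mean and one variance per channel.

```python
import numpy as np

def batchnorm_conv(x, gamma, beta, eps=1e-5):
    """Batch norm for a conv feature map x of shape (N, C, H, W).

    Statistics are shared over N, H and W, so each channel gets one mean
    and one variance; gamma and beta have shape (C,).
    """
    mu = x.mean(axis=(0, 2, 3), keepdims=True)     # shape (1, C, 1, 1)
    var = x.var(axis=(0, 2, 3), keepdims=True)     # shape (1, C, 1, 1)
    x_hat = (x - mu) / np.sqrt(var + eps)
    return gamma.reshape(1, -1, 1, 1) * x_hat + beta.reshape(1, -1, 1, 1)

x = np.random.default_rng(0).normal(2.0, 3.0, size=(8, 4, 5, 5))
y = batchnorm_conv(x, np.ones(4), np.zeros(4))
print(y.mean(axis=(0, 2, 3)).round(6))   # ~0 for each of the 4 channels
print(y.std(axis=(0, 2, 3)).round(3))    # ~1 for each of the 4 channels
```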

Layer Normalization

Normalize across all features within one example at a time, i.e., across the $D$ features of a fully connected layer (or across $C$, $H$, and $W$ for a convolutional feature map).

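$\mu$ and $\sigma$ have size $N$, the number of examples (one mean and one variance per example), while the learnable $\gamma$ and $\beta$ have one entry per feature (size $D$).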

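It is seldom used in CNNs: different channels of a feature map respond to different, largely unrelated features, so sharing a single mean and variance across all of them is a poor fit.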
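A sketch of layer norm for a fully connected layer's output of shape $(N, D)$, with a hypothetical helper `layernorm`. Because the statistics come from each row alone, a batch of one gives the same result as the full batch, so no running averages are needed at test time.

```python
import numpy as np

def layernorm(x, gamma, beta, eps=1e-5):
    """Layer norm for x of shape (N, D): statistics per example (row).

    gamma and beta have shape (D,), one per feature.
    """
    mu = x.mean(axis=1, keepdims=True)             # shape (N, 1)
    var = x.var(axis=1, keepdims=True)             # shape (N, 1)
    return gamma * (x - mu) / np.sqrt(var + eps) + beta

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 10)) * np.arange(1, 6)[:, None]   # rows at different scales
y = layernorm(x, np.ones(10), np.zeros(10))
print(y.mean(axis=1).round(6))   # ~0 for every example
# Each example is normalized on its own, so one example alone gives the
# same output as it does inside the batch.
print(np.allclose(layernorm(x[:1], np.ones(10), np.zeros(10)), y[:1]))   # True
```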

Instance Normalization

Normalize each channel of each example separately, i.e., normalize across the spatial dimensions $H$ and $W$ only.

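$\mu$ and $\sigma$ have size $N \times C$ (one pair per example and channel), while $\gamma$ and $\beta$ have size $C$, one per channel.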
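A sketch of instance norm on a feature map of shape $(N, C, H, W)$, with a hypothetical helper `instancenorm`: the only change from batch norm is that the statistics are taken over axes 2 and 3, so every (example, channel) pair gets its own mean and variance.

```python
import numpy as np

def instancenorm(x, gamma, beta, eps=1e-5):
    """Instance norm for x of shape (N, C, H, W).

    Each (example, channel) pair is normalized over its own H x W positions;
    gamma and beta have shape (C,).
    """
    mu = x.mean(axis=(2, 3), keepdims=True)        # shape (N, C, 1, 1)
    var = x.var(axis=(2, 3), keepdims=True)        # shape (N, C, 1, 1)
    x_hat = (x - mu) / np.sqrt(var + eps)
    return gamma.reshape(1, -1, 1, 1) * x_hat + beta.reshape(1, -1, 1, 1)

x = np.random.default_rng(0).normal(1.0, 2.0, size=(2, 3, 4, 4))
y = instancenorm(x, np.ones(3), np.zeros(3))
print(y.mean(axis=(2, 3)).round(6))   # (2, 3) array of ~0
```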

Group Normalization

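It divides the channels into $G$ groups and normalizes within each group. Instead of normalizing each channel separately (like Instance Norm) or all channels together (like Layer Norm), it normalizes a few channels at a time by grouping them. For each example, the normalized region has size $(C/G) \times H \times W$, so $\mu$ and $\sigma$ have size $N \times G$, while $\gamma$ and $\beta$ are still per channel (size $C$).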
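A sketch of group norm with a hypothetical helper `groupnorm`, assuming $C$ is divisible by the number of groups $G$: reshaping to $(N, G, C/G, H, W)$ lets each group be normalized over its last three axes. The checks show the two extremes: $G = 1$ matches layer norm over $(C, H, W)$, and $G = C$ matches instance norm over $(H, W)$.

```python
import numpy as np

def groupnorm(x, gamma, beta, num_groups, eps=1e-5):
    """Group norm for x of shape (N, C, H, W); gamma, beta have shape (C,).

    Each example's channels are split into num_groups groups, and each
    group of size (C / G) x H x W is normalized on its own.
    """
    n, c, h, w = x.shape
    if c % num_groups != 0:
        raise ValueError("C must be divisible by num_groups")
    g = x.reshape(n, num_groups, c // num_groups, h, w)
    mu = g.mean(axis=(2, 3, 4), keepdims=True)     # shape (N, G, 1, 1, 1)
    var = g.var(axis=(2, 3, 4), keepdims=True)
    x_hat = ((g - mu) / np.sqrt(var + eps)).reshape(n, c, h, w)
    return gamma.reshape(1, -1, 1, 1) * x_hat + beta.reshape(1, -1, 1, 1)

def standardize(x, axes, eps=1e-5):
    """Zero-mean, unit-variance over the given axes (no gamma/beta)."""
    mu = x.mean(axis=axes, keepdims=True)
    return (x - mu) / np.sqrt(x.var(axis=axes, keepdims=True) + eps)

rng = np.random.default_rng(0)
x = rng.normal(size=(2, 6, 4, 4))
ones, zeros = np.ones(6), np.zeros(6)

# G = 1: one group holding all channels -> layer norm over (C, H, W).
print(np.allclose(groupnorm(x, ones, zeros, 1), standardize(x, (1, 2, 3))))  # True
# G = C: one channel per group -> instance norm over (H, W).
print(np.allclose(groupnorm(x, ones, zeros, 6), standardize(x, (2, 3))))     # True
```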