Transformers

Introduction

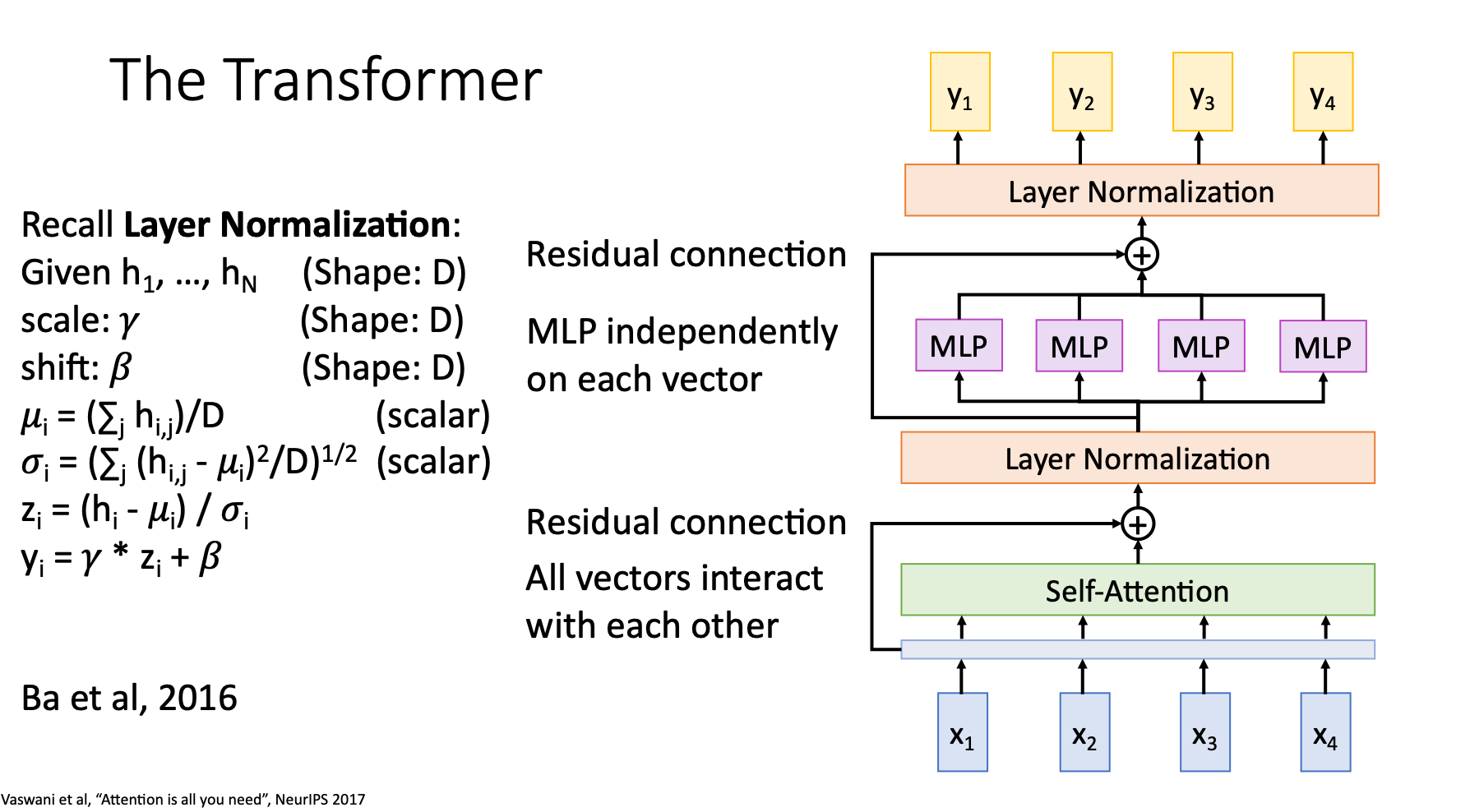

In the well-known paper “Attention Is All You Need”, the authors argue that the transformer is the only kind of layer we need. By stacking transformer blocks, we no longer need RNNs or 1D convolutions.

Structure

- MLP stands for multi-layer perceptron, a small stack of fully-connected layers

- Residual connections (as in ResNet) add a layer's input to its output, so the block can easily fall back to simpler behavior, such as passing its input through unchanged

- Layer Normalization
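The three components above can be combined into one block, roughly as in the sketch below. This is a minimal illustration in plain Python, not a reference implementation. It assumes the post-norm ordering (normalize after each residual sum), a single attention head with the learned query/key/value projections left out, and layer normalization without learned scale or shift. All function names (`layer_norm`, `mlp`, `self_attention`, `transformer_block`, `random_matrix`) are made up for this example.

```python
import math
import random


def layer_norm(x, eps=1e-5):
    """Layer normalization of one token vector (no learned scale or shift)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def linear(x, weight, bias):
    """Fully-connected layer; weight is a list of rows, one per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def mlp(x, w1, b1, w2, b2):
    """Two fully-connected layers with a ReLU in between."""
    hidden = [max(0.0, h) for h in linear(x, w1, b1)]
    return linear(hidden, w2, b2)


def self_attention(tokens):
    """Single-head scaled dot-product attention.

    Assumption: queries, keys and values are the tokens themselves,
    i.e. the learned projection matrices are left out for brevity.
    """
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in tokens]
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]  # numerically stable softmax
        total = sum(exps)
        outputs.append([
            sum(e / total * value[i] for e, value in zip(exps, tokens))
            for i in range(dim)
        ])
    return outputs


def add(a, b):
    """Residual connection: element-wise sum of sub-layer input and output."""
    return [x + y for x, y in zip(a, b)]


def transformer_block(tokens, w1, b1, w2, b2):
    """Post-norm block: LayerNorm(x + Attention(x)), then LayerNorm(x + MLP(x))."""
    attended = self_attention(tokens)
    tokens = [layer_norm(add(t, a)) for t, a in zip(tokens, attended)]
    return [layer_norm(add(t, mlp(t, w1, b1, w2, b2))) for t in tokens]


def random_matrix(rows, cols, rng):
    """Small random weights, standing in for trained parameters."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
dim, hidden = 4, 8
w1, b1 = random_matrix(hidden, dim, rng), [0.0] * hidden
w2, b2 = random_matrix(dim, hidden, rng), [0.0] * dim

tokens = [[1.0, 2.0, 3.0, 4.0], [4.0, 3.0, 2.0, 1.0], [0.0, 1.0, 0.0, 1.0]]
# Stacking: the output of one block feeds the next. The same weights are
# reused here only to keep the sketch short; real stacks use separate weights.
for _ in range(2):
    tokens = transformer_block(tokens, w1, b1, w2, b2)
print(len(tokens), len(tokens[0]))
```

Because every block maps a sequence of vectors to a sequence of the same shape, blocks can be stacked freely, which is what makes the "stack of transformers" design possible.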

Importance

The transformer is often described as the “ImageNet moment for NLP”.

That is, once ImageNet came out, we could first pre-train a new model on ImageNet and then use transfer learning to adapt that model to our own task.

Similarly, general-purpose transformers that big companies have trained on large datasets can be fine-tuned easily for our own NLP tasks.
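The idea of reusing a pretrained model can be sketched in a few lines. The toy example below is only an illustration under strong assumptions: `pretrained_encoder` is a hypothetical stand-in for a large pretrained transformer (here just two hand-picked word counts), and only a small task head is trained on top of it while the encoder stays frozen. Real fine-tuning often updates the pretrained weights as well.

```python
import math


def pretrained_encoder(text):
    """Hypothetical stand-in for a frozen pretrained model.

    A real encoder would be a transformer; here two hand-picked word counts
    play the role of the features it has already learned.
    """
    words = text.lower().split()
    positive = sum(w in {"good", "great"} for w in words)
    negative = sum(w in {"bad", "awful"} for w in words)
    return [float(positive), float(negative)]


def predict(text, weights, bias):
    """Task head: logistic regression on top of the frozen features."""
    feats = pretrained_encoder(text)
    z = sum(w * f for w, f in zip(weights, feats)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune_head(examples, epochs=200, lr=0.5):
    """Train only the head with SGD; the encoder is never updated."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in examples:
            feats = pretrained_encoder(text)
            # Gradient of the log loss with respect to the pre-sigmoid score.
            error = predict(text, weights, bias) - label
            weights = [w - lr * error * f for w, f in zip(weights, feats)]
            bias -= lr * error
    return weights, bias


# A tiny labeled dataset for our own task (1 = positive, 0 = negative).
examples = [("good great film", 1), ("bad awful film", 0), ("great", 1), ("bad", 0)]
weights, bias = fine_tune_head(examples)
print(predict("a great movie", weights, bias) > 0.5)
```

The point of the design is that the expensive part (the encoder) is trained once on large data, while adapting to a new task only needs a small labeled set and a cheap training loop.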