Concept

Innovations

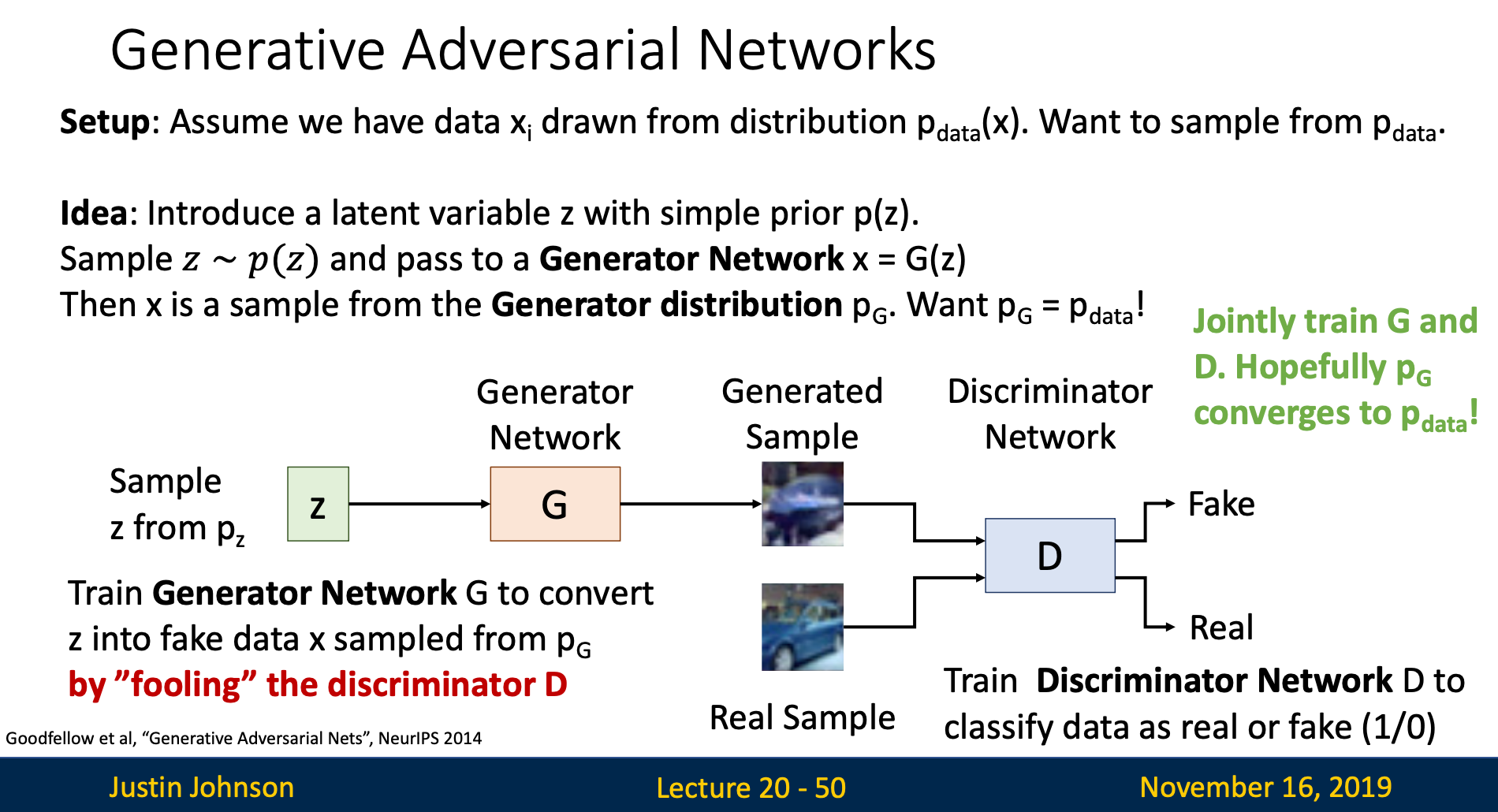

Traditional generative models such as autoregressive models and VAEs are trained by maximizing the likelihood of the training data (VAEs maximize a lower bound on it).

GANs introduced a radically different approach: they abandon likelihood computation entirely and instead use a discriminator network to judge whether images look real. This lets GANs focus purely on producing realistic images.

Steps

Step 1: Sample

We sample a random noise vector $z$ from a prior distribution $p(z)$, typically a simple distribution such as a standard Gaussian $\mathcal{N}(0, I)$.

Step 2: Generator Network

The generator network $G$ transforms the noise into a synthetic image: $x = G(z)$.

Step 3: Discriminator Network

The discriminator is a binary classifier that distinguishes between real training images and fake generated images, outputting a probability that an input image is real.

Step 4: Adversarial Training

Both networks are trained simultaneously: the generator tries to fool the discriminator by creating more realistic images, while the discriminator tries to better detect fake images. This adversarial competition drives both networks to improve.
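The first three steps can be sketched in a few lines of plain Python. This is only a toy illustration: the linear `generator`, the logistic-regression `discriminator`, and all weight values are made-up stand-ins for real deep networks, and the "image" has just three pixels.

```python
import math
import random

def sample_noise(dim, rng):
    """Step 1: draw z ~ N(0, I) as a list of independent standard normals."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]

def generator(z, weights, bias):
    """Step 2: toy linear generator x = W z + b (stand-in for a deep network)."""
    return [sum(w * zi for w, zi in zip(row, z)) + b
            for row, b in zip(weights, bias)]

def discriminator(x, weights, bias):
    """Step 3: toy logistic-regression discriminator, D(x) = sigmoid(w . x + b)."""
    logit = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-logit))

rng = random.Random(0)  # fixed seed so the sketch is reproducible
z = sample_noise(2, rng)

# Hypothetical parameters: map 2-D noise to a 3-"pixel" image.
g_weights = [[0.5, -0.2], [0.1, 0.3], [-0.4, 0.8]]
g_bias = [0.0, 0.1, -0.1]
fake_image = generator(z, g_weights, g_bias)

# Probability (according to D) that the generated image is real.
p_real = discriminator(fake_image, [0.2, -0.5, 0.3], 0.0)
```

Step 4 then adjusts the discriminator's parameters to push `p_real` toward 0 on generated images and toward 1 on real ones, while the generator's parameters are adjusted to push `p_real` toward 1.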

Training Objective

Objective

Expression

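We jointly train the generator $G$ and the discriminator $D$ with a minimax game:

$$\min_G \max_D \; \mathbb{E}_{x \sim p_{data}}[\log D(x)] + \mathbb{E}_{z \sim p(z)}[\log(1 - D(G(z)))]$$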
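As a rough illustration, the value of the game, $\mathbb{E}_{x \sim p_{data}}[\log D(x)] + \mathbb{E}_{z \sim p(z)}[\log(1 - D(G(z)))]$, can be estimated from the discriminator's outputs on a batch. The function name `gan_value` and the example probabilities below are made up for this sketch.

```python
import math

def gan_value(d_real, d_fake):
    """Monte Carlo estimate of the GAN objective.

    d_real: D(x) on real samples; d_fake: D(G(z)) on generated samples.
    All values must lie strictly between 0 and 1.
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# A discriminator that separates real from fake well gets a high value ...
confident = gan_value([0.9, 0.8], [0.1, 0.2])
# ... while one that outputs 0.5 everywhere gets exactly -log 4.
confused = gan_value([0.5, 0.5], [0.5, 0.5])
```

The discriminator pushes this number up, and the generator pushes it down by making `d_fake` larger.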

Reason

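$p_{data}$ is the probability distribution that generates the training data, and $p_G$ is the distribution of the generated images $G(z)$. When the minimax game reaches its global minimum,

$$p_G = p_{data}$$

which means that the generator's samples follow the same distribution as the training data, so generated images are indistinguishable from real ones.

The proof is in the slides.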
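For orientation, here is an outline of the standard argument (the slides remain the reference for the full proof). Write $V(D, G) = \mathbb{E}_{x \sim p_{data}}[\log D(x)] + \mathbb{E}_{z \sim p(z)}[\log(1 - D(G(z)))]$ and let $p_G$ be the distribution of $G(z)$. For a fixed generator, the inner maximization is solved pointwise by

$$D^*_G(x) = \frac{p_{data}(x)}{p_{data}(x) + p_G(x)}$$

Substituting this back gives

$$V(D^*_G, G) = -\log 4 + 2\,\mathrm{JSD}(p_{data} \,\|\, p_G)$$

where $\mathrm{JSD}$ is the Jensen-Shannon divergence. Since $\mathrm{JSD} \ge 0$ with equality only when the two distributions coincide, the minimum over $G$ is $-\log 4$, attained exactly when $p_G = p_{data}$; at that point $D^*_G(x) = 1/2$ everywhere.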

Explanation

First Expression

The first term is $\mathbb{E}_{x \sim p_{data}}[\log D(x)]$. The discriminator wants $D(x) = 1$ for real data, so it wants to maximize this term.

Second expression

The second term is $\mathbb{E}_{z \sim p(z)}[\log(1 - D(G(z)))]$.

For the discriminator: on generated images we want $D(G(z)) = 0$, so the discriminator wants to maximize this term.

For the generator: the generator wants to produce images that fool the discriminator, i.e., $D(G(z)) = 1$, so it wants to minimize this term.

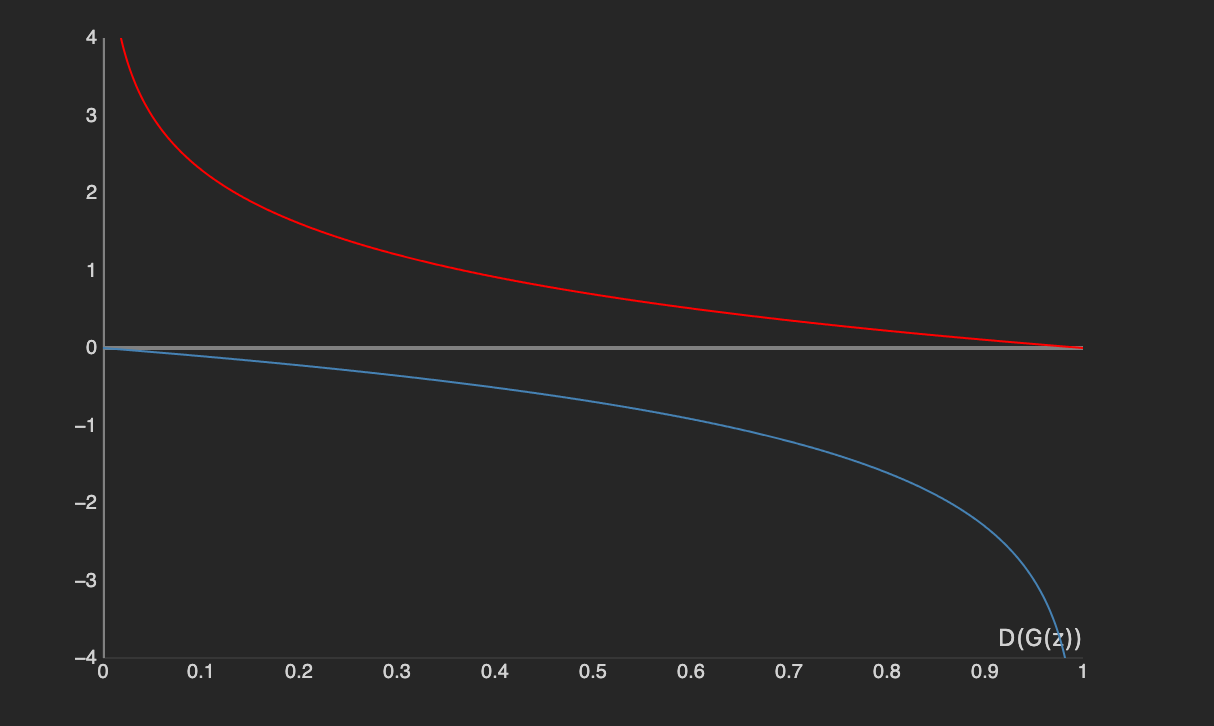

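Problem & Solution: vanishing gradients at the start of training

At the start of training the generator is very bad, so the discriminator easily rejects its samples and $D(G(z)) \approx 0$. In this regime the gradient of $\log(1 - D(G(z)))$ is small, so $G$ learns very slowly.

Hence, instead of training $G$ to minimize $\log(1 - D(G(z)))$, we train $G$ to minimize $-\log D(G(z))$, which gives a strong gradient when $D(G(z)) \approx 0$.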
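A small numeric check makes the difference concrete. The sketch assumes the discriminator ends in a sigmoid, $D(G(z)) = \sigma(a)$ for some logit $a$ (an assumption, not something these notes specify), and compares the gradient of each generator loss with respect to that logit. The function names are made up for illustration.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def grad_saturating(a):
    """d/da of log(1 - sigmoid(a)), the original generator loss."""
    return -sigmoid(a)

def grad_non_saturating(a):
    """d/da of -log(sigmoid(a)), the replacement generator loss."""
    return -(1.0 - sigmoid(a))

# Early in training D is confident the sample is fake: D(G(z)) is about 0.0025.
early_logit = -6.0
g_sat = grad_saturating(early_logit)      # about -0.0025, almost no signal
g_non = grad_non_saturating(early_logit)  # about -0.9975, strong signal
```

Both losses push the logit in the same direction (toward "real"), but only the second one does so with a usable magnitude while the generator is still poor.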

Alternating Gradient Update

Challenge

Because of the minimax game, the discriminator's parameters try to maximize the objective, while the generator's parameters try to minimize it.

Thus, a single gradient-descent step on one shared loss cannot update all of the GAN's parameters at once.

Solution: Alternating Gradient Update

The parameters are split into two groups: those of $D$ and those of $G$. In every iteration, we update only one group while the other stays fixed.

Why can't we update them at the same time?

Think of $D$ and $G$ as two people. $D$ wants to climb uphill and $G$ wants to climb downhill. If we update them together, we would be pushing the same function in opposite directions at the same time.
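The alternating scheme can be sketched on a toy two-player objective. The function $f(d, g) = g^2 - d^2 + d\,g$ below is not a GAN loss; it is a made-up stand-in with one scalar `d` that plays the maximizer (like $D$) and one scalar `g` that plays the minimizer (like $G$), chosen because its saddle point at $(0, 0)$ is easy to verify by hand.

```python
def value(d, g):
    """Toy objective: concave in d (the maximizer), convex in g (the minimizer)."""
    return g * g - d * d + d * g

def grad_d(d, g):
    """Partial derivative of value with respect to d."""
    return -2.0 * d + g

def grad_g(d, g):
    """Partial derivative of value with respect to g."""
    return 2.0 * g + d

def alternating_updates(d, g, lr=0.1, steps=200):
    history = []
    for _ in range(steps):
        d = d + lr * grad_d(d, g)  # ascent step on d while g is frozen
        g = g - lr * grad_g(d, g)  # descent step on g while d is frozen
        history.append(value(d, g))
    return d, g, history

d_final, g_final, history = alternating_updates(d=1.0, g=1.0)
```

Both players end up at the saddle point, yet the recorded `history` swings between positive and negative values on the way there instead of decreasing steadily, because each player keeps undoing part of the other's progress.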

Problem

In normal neural networks, we can plot the loss curve to check whether the model is training well.

However, in GANs, the discriminator wants to maximize the objective while the generator wants to minimize it. Hence, the objective's curve bounces up and down, making it hard to tell whether the model is working well.